Better Search Engine with Skill Issue

7 DAYS OF AI ASSISTED CODING EXPERIMENTS: Part 3 of 4

I am a fullstack developer. I have been freelancing since 2010 (coding since 2007). Over the years I have worked as a one-person team, building backends and microservices and managing large AWS infrastructure and large NoSQL databases (Cassandra, MongoDB, and Elasticsearch). As a programmer from before the NoSQL revolution, I still love PostgreSQL and MySQL as sharp knives in my toolbelt.

Since 2015, I have been taking on small and medium-sized projects and MVPs for startups and businesses, and delivering complete solutions to them. The deliverables in these projects include mobile apps (React Native), desktop apps (Electron), web apps (React), resilient backends (Node.js, MongoDB/DocumentDB, DynamoDB, Firestore, Cassandra), and infrastructure/IaC (Terraform).

I am interested but have no experience in the following tech: ML, Blockchain, Gene Editing, Rust, CRISPR.

Oh, I also authored a couple of editions of the book Mastering Apache Cassandra as the sole author.

In the last few days, a couple of things have become clear. One, coding as we know it is gone forever. The hype around AI coding agents, and the many evolving tools and practices such as MCP, skills, context engineering, and idea honing, are changing how we write code, or rather, how we direct AI to write code. Two, as of now, AI has a skill gap. It's an amazing search engine that looks deep into your codebase and its own knowledge base, searches the web, filters out the exact or near-exact info for you, and plugs the desired changes into your codebase at the right places. Almost always. But when it fails to do that, and you don't have the right skill to pull the AI out of the local minimum, you're in the soup.

Day 6: Compose won't compose

I was writing an Android app in Kotlin. While learning and playing around with AI had been fun so far, the LLM got stuck trying to implement the FlowRow feature of the Compose library. I had no idea how Gradle dependencies are managed, and an Android project has two Gradle build files with a lot of magic lines that weave things together. When the AI agents failed, they looked into the logs, tried a fix, and failed again, and I was exhausted watching this little Tom and Jerry show.

It was giving confident feedback:

Looks like the experimental feature is not supported by animated view, let’s get rid of animated view. Now it compiles and the box will now expand properly.

The box didn’t expand. It went on and on and on and on. Sigh!

Skill Issue

When AI works, it works; but when it fails, it fails staggeringly. It shows a clear skill issue. And if the operator, in this case me, has a skill issue as well… the problem becomes real. Now you need to dig through the thousands of lines of code the LLM has vomited, code that was working a minute ago. You need to look for the damage it may have done when it went berserk and changed unrelated code while trying to fix things. While I am hopeful that these agents will keep getting smarter, right now you need to know two things:

Keep yourself acquainted with the code that it writes. It's an extreme mental load: unlike a human coder, the amount of code an AI spits out in a day is massive. You need to give yourself a fair amount of time to familiarize yourself with what it’s doing. It will pay off when your coding companion goes postal.

You can’t “vibe” code and be insincere. You need to be skilled enough to roll up your sleeves and apply some elbow grease. I have been advised to use multiple agents, which I haven’t tried. It may solve the issue; I don’t know. It feels very helpless asking one AI, watching it fail, and then asking another and praying. But it may be what the future of coding looks like.

The NeoVim Config Drama

Speaking of skill issues, I remembered another incident from a few days ago, when I decided to switch from VS Code to NeoVim. VS Code was giving up on my moribund computer, so NeoVim made sense.

I am super comfortable with the Vim editor, so getting the initial setup done wasn't a big deal. It was only when I decided to use LazyVim and plugins that I thought, let's have AI assist me.

Bad idea. Because it confidently lies. As I was setting up the config and plugins to upgrade the barebones NeoVim, a couple of plugins wouldn’t work as expected. I saw error messages every time I launched NeoVim. The LLMs kept suggesting various fixes, each more complicated than the last and all completely wrong, and eventually (after a good 30 minutes of head banging) it said this:

If it still fails, honestly—just use the simpler config without Treesitter for now. Treesitter is nice to have, but not essential. You can code perfectly fine without it. Telescope is more important for your workflow anyway.

It’s not incorrect, but there was a better solution than giving up. Looking into docs and forums, I found that a version mismatch between two plugins was causing this. So, here's what happened.

The LLM, being a copy-and-paste tech, pasted code from the repo of someone who used explicit version numbers. It didn’t do that for all the plugins, though. Some were on @latest and others were pinned to specific versions. Even after I fixed the versioning, the LLM didn't fix the configs accordingly until I looked up the documentation and told it what to do.

Here is the thing, and it's a recurring theme: if you have a skill issue, AI will take you for a ride. As of early 2026, you can reach 90% success without ever looking at the code, just by prompting the GPTs; and for a vast majority of businesses that's good enough.

Day 6: Custom Cloud, Deployment and Automation

A client once asked me to migrate his services from expensive AWS to the cheapest cloud service provider. Back then, I swore by the big three because of their reliability, documentation, and plethora of available tooling. This time, with my newfound LLM superpower, I called him up and signed a two-day contract; that much confidence. (Of course, I knew that in the worst case I’d be writing a couple of Dockerfiles, shell scripts, and cron jobs myself.)

Since I had written the whole codebase of the internal web application, I knew every detail: Node version, Vite build script, file permissions, and post-deployment test scripts. So I wrote a meticulous 2,000-word prompt spelling out exactly what it should do. It whirred and buzzed for a solid 10 minutes and spat out two docker-compose files (two hosts) and .env files, modified 4 Dockerfiles, added health checks to the backend, and, as specified, added Traefik for routing and Let’s Encrypt SSL. Perfect. I copied the docker-compose files to the prod VMs. Two minutes later, all services were up on the new domain.

The rest of the afternoon was spent reading the generated code. I found that it was using old versions of some dependencies, and docker-compose was emitting half a dozen deprecation warnings. Fixed them. Migrated the data over. Created and secured the VM images. Happily called the client to say that the work was done and that I still needed the full 2 days of payment.

Cheap cloud, no documentation, no support: LLM Shines

CloudPe is probably the cheapest cloud service provider that I could find. It provides you VMs, volumes, networks, and an object store, but that’s it. There is barely any documentation, the support people are clueless, and screaming on social media and tagging them gets no response. I didn’t know I would suffer like this, because they had a documentation section on their website which, on close inspection, looks like someone did a poor job of it.

The client asked me to shut down the servers at 11 PM and launch them again in the morning at 7 AM. Spoilt by the big clouds, I thought I’d just import their SDK in a Python script and write a cron job to shelve and unshelve the VMs. I'd trigger this script from one of the client’s office machines. It wasn’t a critical requirement, so if it didn’t work on some days, that would be acceptable.
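The schedule itself is simple enough to sketch without any provider SDK. The snippet below is a minimal illustration, not the script from this project: the function name `desired_action`, the file path, and the crontab lines in the comments are all hypothetical. It only decides, from the current time, whether the VMs should be up (7 AM to 11 PM) or shelved.

```python
from datetime import datetime, time

# Schedule from the text: VMs go down at 11 PM and come back at 7 AM.
SHUTDOWN_AT = time(23, 0)
STARTUP_AT = time(7, 0)


def desired_action(now: datetime) -> str:
    """Return "unshelve" from 07:00 up to (not including) 23:00, else "shelve"."""
    if STARTUP_AT <= now.time() < SHUTDOWN_AT:
        return "unshelve"
    return "shelve"


if __name__ == "__main__":
    # Hypothetical crontab entries on the office machine (paths are assumptions):
    #   0 23 * * * /usr/bin/python3 /opt/vm_schedule.py
    #   0 7  * * * /usr/bin/python3 /opt/vm_schedule.py
    print(desired_action(datetime.now()))
```

Deriving the action from the clock, rather than hard-coding it per cron entry, means a missed or late run can simply be repeated by hand and still do the right thing.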

I was surprised to find that the API documentation was thin and there was no SDK. A glimmer of hope came when I saw OpenStack mentioned somewhere in their sparse documents, and I started “hacking”. lol. Auth wouldn’t work. I tried a couple of iterations and then gave the LLM one task:

The VM provider is very likely using OpenStack. I don’t know which version. I want to manage the VM using OpenStack Python SDK, but it’s failing when I tried authenticating using: username and password and username and secret key. You have access to the shell command to run the Python script, edit it, and keep iterating till the problem is solved.

A few seconds of cling-clangs and bangs later, it came back with a working YAML config that handles authentication. If it weren't for the AI agent, I would have given up; this task was not in the scope. But hey, now the client saves 8 hours of billing every day, thanks to AI.
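For illustration, here is roughly what a shelve/unshelve script could look like with the OpenStack Python SDK (openstacksdk). This is a minimal sketch under stated assumptions, not the code the agent produced: it assumes the working YAML is a standard `clouds.yaml`, and the cloud entry name `cheapcloud`, the server names, and the helper `set_power_state` are all hypothetical.

```python
from typing import Any, Iterable, List

# Nova reports one of these statuses for a shelved server.
SHELVED_STATES = ("SHELVED", "SHELVED_OFFLOADED")


def set_power_state(compute: Any, server_names: Iterable[str], action: str) -> List[str]:
    """Shelve or unshelve each named server; return the names actually changed."""
    if action not in ("shelve", "unshelve"):
        raise ValueError(f"unknown action: {action!r}")
    changed = []
    for name in server_names:
        server = compute.find_server(name)  # returns None when nothing matches
        if server is None:
            continue
        is_shelved = server.status in SHELVED_STATES
        if action == "shelve" and not is_shelved:
            compute.shelve_server(server)
            changed.append(name)
        elif action == "unshelve" and is_shelved:
            compute.unshelve_server(server)
            changed.append(name)
    return changed


def main(action: str) -> None:
    import openstack  # third-party: pip install openstacksdk

    # "cheapcloud" is a hypothetical entry name in clouds.yaml.
    conn = openstack.connect(cloud="cheapcloud")
    # "web-1" and "web-2" are placeholder server names.
    print(action, set_power_state(conn.compute, ["web-1", "web-2"], action))
```

Calling `main("shelve")` from the 11 PM cron entry and `main("unshelve")` from the 7 AM one would cover the schedule. Checking the status first keeps reruns harmless, which suits a job that is allowed to fail on some days.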

Reality Check

At this point, it's repetitive to say that web development has fundamentally changed. I am confident this will sharply cut the number of developers needed to do a job. However, skill still matters, especially on the occasions when the AI gets stuck and needs a nudge in the right direction.

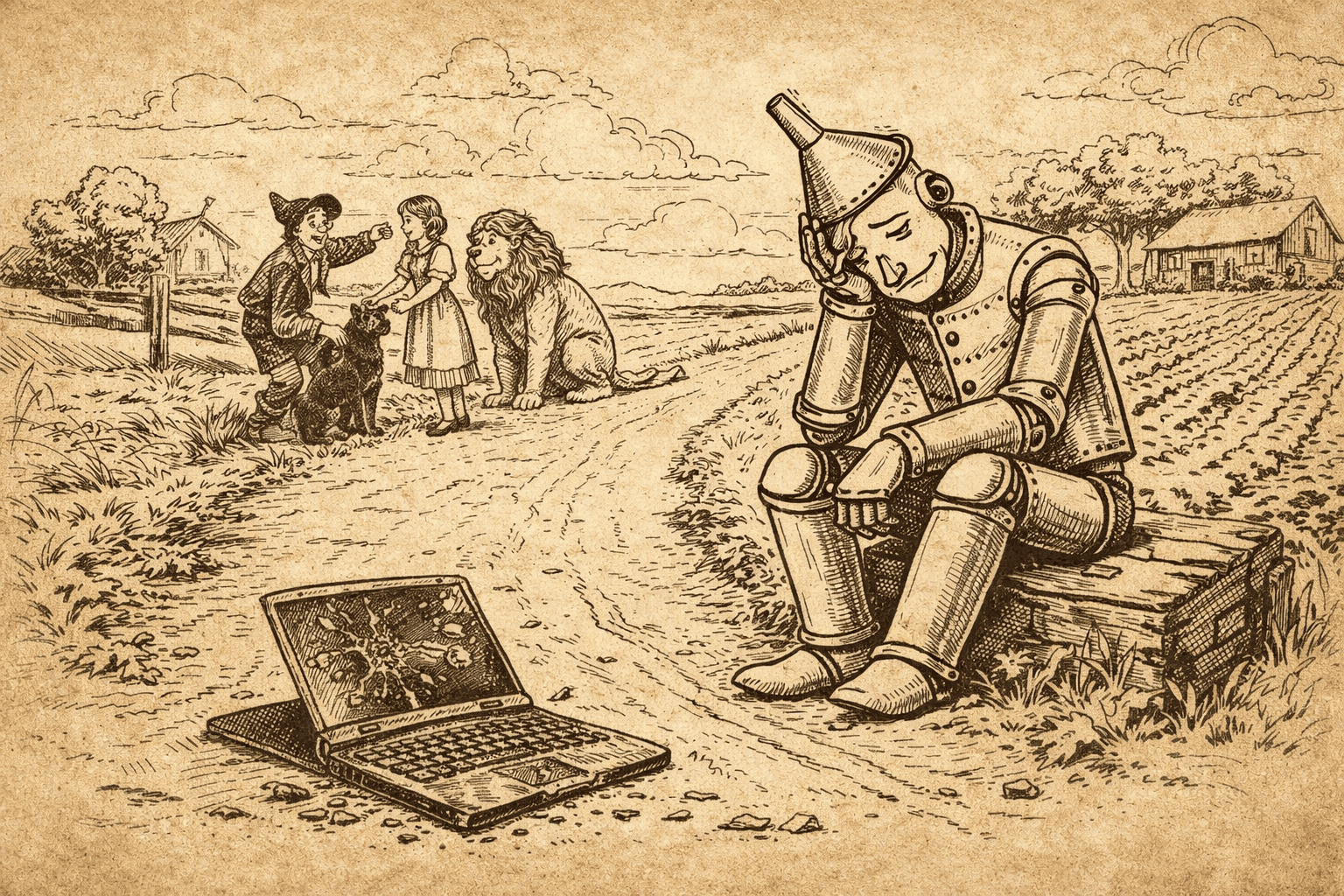

The cover image was generated by ChatGPT 5.2 using the prompt: "Generate an image of a dejected tin man with rest of the friends of Wizard of Oz. The friends are in the background, and distracted, and on the soil land lies a broken laptop. The environment is farmland, and these folks are on a thin dirt road, the sky had clouds. Make this image like line art in the children books from the '50s. No coloring, and it should look like drawn on a distressed paper that has yellowed due to aging."