Production Grade Bare-metal Kubernetes with AI

7 DAYS OF AI ASSISTED CODING EXPERIMENTS: Part 4 of 4

I am a fullstack developer. I have been freelancing since 2010 (coding since 2007). Over the years I have worked as a one-person team, building backends and microservices, and managing large AWS infrastructure and large NoSQL databases (Cassandra, MongoDB, and Elasticsearch). Being a pre-NoSQL-revolution programmer, I still love PostgreSQL and MySQL as sharp knives in my toolbelt.

Since 2015, I have been taking on small and medium-sized projects and MVPs for startups and businesses, and delivering complete solutions to them. The deliverables in these projects include: mobile apps (React Native), desktop apps (Electron), webapps (React), resilient backends (NodeJS, MongoDB/DocumentDB, DynamoDB, Firestore, Cassandra), and infrastructure/IaC (Terraform).

I am interested but have no experience in the following tech: ML, Blockchain, Gene Editing, Rust, CRISPR.

Oh, I also authored a couple of editions of the book Mastering Apache Cassandra as the sole author.

So far I've experimented with using LLMs to code on my behalf. Days 1 through 3 were greenfield projects: a React Native app, a marketing website, an OCR and processing webapp. Glitters and gold. Day 4 was a somewhat successful refactor of a full-stack webapp originally written 10 years ago in 2016. Day 5 was learning and writing a native Android app in Kotlin and interfacing with system APIs. Day 6 was firefighting with LLMs to get them to do the right thing after they had confidently done it wrong. And, also, basic cloud automation.

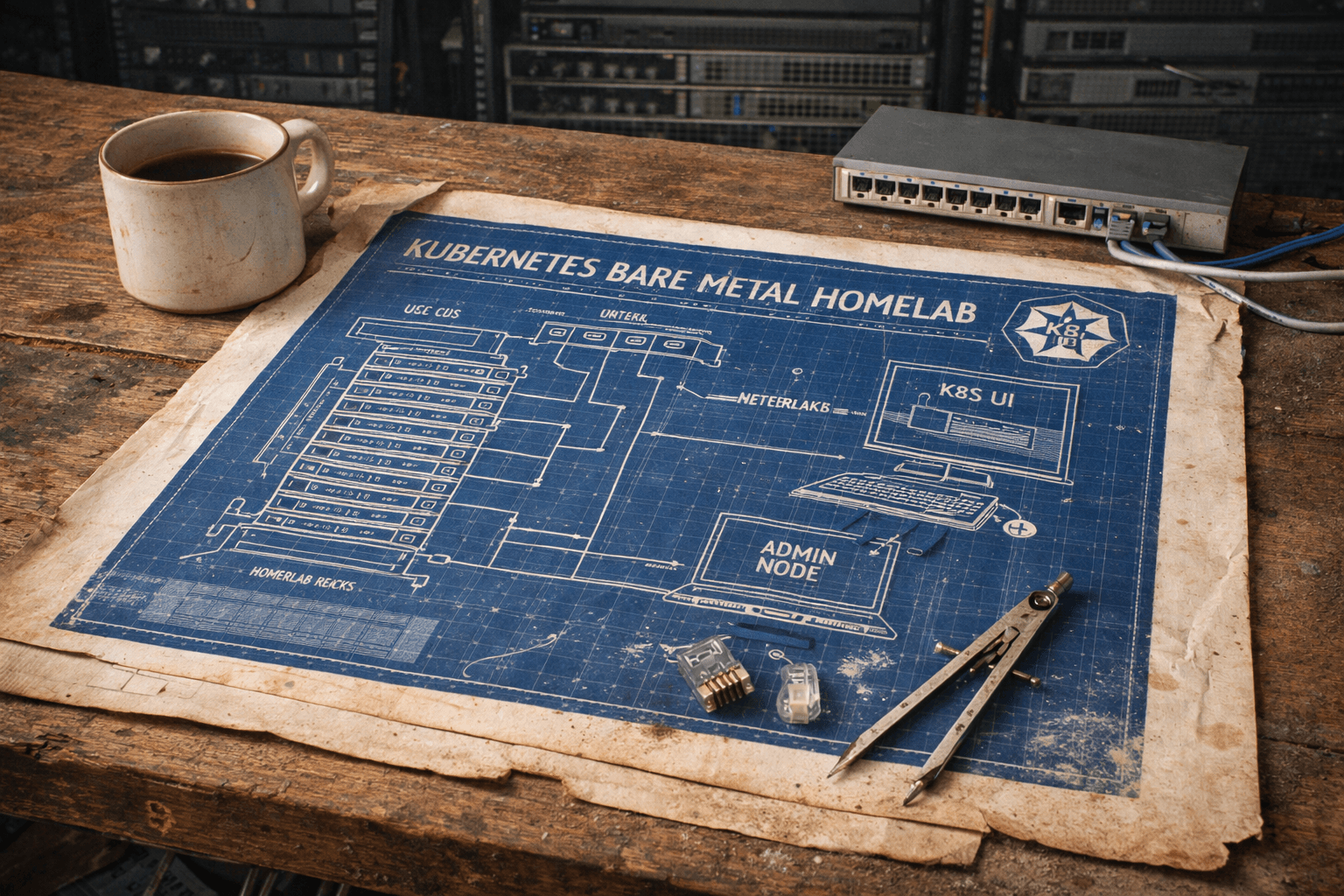

What is left of the things I do professionally is setting up production-grade bare-metal infra. So, let's do it. When I started, I thought it would easily take more than a day, but I was impressed by the amount of progress I was able to make in one day.

Day 7: Bare Metal Cluster with Ansible and K3s: DevOps Galore

Back in 2022, I was working on a really messy on-prem Kubernetes (K8s) cluster: customized Rancher, outdated Kubernetes components, inconsistent host OSes. You don’t see these problems in managed Kubernetes instances, but they also encouraged me to have my own bare-metal K8s cluster. In my excitement, I backed a Turing Pi cluster board for the Raspberry Pi Compute Module on Kickstarter. Big mistake!

For those who need a reminder, Covid was still raging and had shaken the industry. There was a huge gap between demand and supply. Scalpers were charging 10x for cheap Raspberry Pis. Long story short, in late 2023 I finally got the cluster board and two Raspberry Pi compute modules (CM4), and bought two powerful SBCs from Turing Pi called RK1, each with 16 GB RAM, a 6 TOPS NPU, and a powerful 8-core (4x Cortex-A76 + 4x Cortex-A55) 64-bit Rockchip RK3588 SoC. I quickly set up a K3s cluster and a quick and dirty GitOps. Basically, a functioning Kubernetes cluster, but nothing that I might be proud of. Partially because I was busy with other work, and partially because the early enthusiasm of mid ‘22 had died by late ‘23.

Enter LLM

Dusting off my wardrobe full of “I once was crazy about it” hobbies, I found my Turing Pi cluster. Excited by the speed of execution over the last six days, I decided to build the production-grade K3s cluster. It wasn't an easy ride, and definitely not one I could have managed without AI. It would have taken me a week to do what I did in 8 hours.

Getting the OS right

Initially, I had DietPi on the CM4s, and as I started adding things, I realized it was missing a lot of the tooling required for K8s and the utilities needed to debug. The LLM confirmed my bias. Since the RK1s were on Ubuntu 22.04, I loaded the CM4s with their own flavor of Ubuntu 22.04. Ansible did the job, yet I couldn’t see the mass storage on the CM4 nodes.

Demystifying dmesg

DietPi was showing an SSD, but after I flashed the CM4 nodes with Ubuntu 22.04 (Raspberry Pi edition), it didn’t show up. dmesg didn’t seem to have anything interesting. Google failed, the Turing Pi forum wasn’t much help, and everyone was pointing to a possible loose connection. So, I decided to give the whole “display message / dmesg” log to an LLM, and it said:

PCIe SATA controller detected

But its I/O BARs all failed to assign

Only the memory BAR (BAR 5) was successfully assigned.

This is a known issue on ARM/BCM2711 — the PCIe host bridge doesn't support I/O space, so legacy I/O BARs (which the ASM1061 AHCI/IDE controller needs) can't be mapped.

Result: No SATA drive (sda) ever appeared

So the SATA controller (and presumably an SSD behind it) is physically present and detected by the PCI layer, but the drive isn't enumerable because the I/O BAR assignments failed.

It did provide me with a way to fix this issue, though one that may not work. It also mentioned updating to Ubuntu 24.04, which is what I had been thinking of doing before knowing the issue; but I surely wouldn’t have been able to explain what went wrong with 22.04!

Fixing Ansible script

My handwritten Ansible wasn’t perfect, but it did the job. The only problem was that I had to go to each device and bootstrap it by creating the nishant user, copying my public SSH key for passwordless login, and setting a static IP, all before I could run my script to set up Avahi mDNS and mount the mass storage devices (SSD and NVMe).

I asked the AI agent to write a temporary Ansible script that uses the default credentials to log in to the devices at their DHCP-assigned IPs, and then does the one-time setup:

Create user

Upload my public key for passwordless login

Set static IPs outside the DHCP allocation range

Install, and run Avahi mDNS

Mount the appropriate mass-storage device depending on which node we are on.

Delete the default user.

Within a few seconds, I had the throwaway script without much effort.
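One step in that list is easy to get wrong by hand: picking static IPs that really are outside the DHCP pool. Here is a minimal sketch of such a check using only Python's standard library. The network, pool boundaries, and addresses are hypothetical placeholders, not the values used on this cluster.

```python
from ipaddress import IPv4Address, IPv4Network


def is_safe_static_ip(candidate: str, network: str,
                      pool_start: str, pool_end: str) -> bool:
    """Return True if `candidate` is a usable host address on `network`
    and does not fall inside the DHCP pool [pool_start, pool_end]."""
    net = IPv4Network(network)
    ip = IPv4Address(candidate)
    # Reject addresses on another network, plus the network/broadcast addresses.
    if ip not in net or ip in (net.network_address, net.broadcast_address):
        return False
    # Reject anything the DHCP server might hand out to another device.
    return not (IPv4Address(pool_start) <= ip <= IPv4Address(pool_end))


# Hypothetical LAN: 192.168.1.0/24 with a DHCP pool of .100 to .200
print(is_safe_static_ip("192.168.1.201", "192.168.1.0/24",
                        "192.168.1.100", "192.168.1.200"))
print(is_safe_static_ip("192.168.1.150", "192.168.1.0/24",
                        "192.168.1.100", "192.168.1.200"))
```

The first call prints True and the second prints False, since .150 sits inside the assumed pool.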

A long streak of debugging to get the perfect K3s cluster!

Let me spare you the details, but the following few hours went into discussing and debugging with LLMs to understand and come up with a solid set of Ansible… Here is a quick laundry list of the things that were done.

Ansible installed the K3s server on one node and K3s agents on the rest. Disabled the built-in Traefik and ServiceLB, and set the TLS SAN. Added appropriate labels. Grabbed the kubeconfig and saved it to my local ~/.kube/

Got Helm to install ArgoCD and configured my GitHub repo as a secret to get GitOps going

And, finally, applied the YAML for an Application, indicating that the k3s/apps directory will hold all the definitions for future “children apps”.

Initially, I used Helm to get MetalLB, Traefik (HTTP → HTTPS redirect, LoadBalancer type, cross-namespace IngressRoute enabled), Kube-Prometheus-Stack, and cert-manager. At this point, I held off on installing the Let’s Encrypt issuer and Cloudflared

With a bit of debugging and going through configuration with AI help, the cluster was up and running.

kubectl get pods -A | grep -v Running | grep -v Completed

Returns nothing besides the header row. Which is good.
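The same check can be done a little more precisely by looking only at the STATUS column, so that a pod whose name happens to contain "Running" is not filtered out by accident. This is a minimal sketch that parses the plain-text output of `kubectl get pods -A`; the sample rows and pod names are made up for illustration.

```python
def unhealthy_pods(kubectl_output: str) -> list[tuple[str, str, str]]:
    """Return (namespace, name, status) for every pod whose STATUS is
    neither Running nor Completed. Expects `kubectl get pods -A` output
    with its header row."""
    rows = []
    for line in kubectl_output.strip().splitlines()[1:]:  # skip the header row
        fields = line.split()
        if len(fields) < 4:
            continue  # not a pod row
        namespace, name, _ready, status = fields[:4]
        if status not in ("Running", "Completed"):
            rows.append((namespace, name, status))
    return rows


# Hypothetical output, for illustration only
SAMPLE = """\
NAMESPACE     NAME                      READY   STATUS              RESTARTS   AGE
kube-system   coredns-abc               1/1     Running             0          5m
argocd        argocd-server-xyz         0/1     ContainerCreating   0          5m
kube-system   helm-install-traefik-q1   0/1     Completed           0          5m
"""

print(unhealthy_pods(SAMPLE))
```

An empty list is the equivalent of the grep pipeline printing no pod rows.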

Configuring ArgoCD: App of Apps

ArgoCD's "App of Apps" pattern uses a single root Application that points to a directory of other Application manifests. When the root syncs, ArgoCD discovers and creates all child Applications, which then deploy their respective Helm charts or raw manifests.
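To make the pattern concrete, here is a rough sketch of what a root Application looks like, built as a Python dict and printed as JSON (which `kubectl apply -f` accepts as well as YAML). The k3s/apps path comes from the setup above; the repository URL, the application name, and the sync policy are placeholder assumptions, not the actual manifest used here.

```python
import json


def root_application(repo_url: str, path: str = "k3s/apps") -> dict:
    """Build an Argo CD root Application that points at a directory of
    child Application manifests (the "App of Apps" pattern)."""
    return {
        "apiVersion": "argoproj.io/v1alpha1",
        "kind": "Application",
        "metadata": {"name": "root", "namespace": "argocd"},  # name is hypothetical
        "spec": {
            "project": "default",
            "source": {
                "repoURL": repo_url,
                "targetRevision": "HEAD",
                "path": path,  # directory holding the child Applications
            },
            "destination": {
                "server": "https://kubernetes.default.svc",  # the in-cluster API server
                "namespace": "argocd",
            },
            # Assumed policy: sync automatically, remove what disappears from Git
            "syncPolicy": {"automated": {"prune": True, "selfHeal": True}},
        },
    }


# Hypothetical repository URL
root = root_application("https://github.com/example/homelab.git")
print(json.dumps(root, indent=2))
```

Each manifest inside that directory is itself an Application of the same shape, pointing at a Helm chart or a folder of raw manifests.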

One of the things I was worried about was what would happen to the existing services. Would they be left unmanaged? Would Argo create duplicate services? I was ready to see what mess it made. Surprisingly, it adopted the existing services and everything worked great.

Distributed Persistent Storage: Longhorn

I needed block storage, as two of my nodes had SSD and NVMe devices (and I will be adding more NVMe storage to another node, but have you seen the skyrocketing prices of RAM and storage?!). Local-path locks the pods to the same node. Unfortunately, it wasn’t as easy as I had thought. I learnt that I needed open-iscsi and nfs-common installed. A little fstab editing and some configuring in the UI later, things were working.

Getting TLS working with Cloudflare DNS

This part was relatively smooth given that the process requires multiple jumps: cert-manager → Cloudflare API → _acme-challenge.mydomainname.com → Let’s Encrypt verifies via public DNS → certificate issued, stored as K8s secret → Traefik IngressRoute references the secret for TLS.

I had never dealt with cert-manager, so I was worried about manual renewal or having to set up a cron job for it. So I asked the LLM, and it said:

It reads the cert itself. X.509 certs have a notAfter field baked in. cert-manager checks this and renews at 2/3 of the validity period by default — Let's Encrypt issues 90-day certs, so renewal triggers at ~day 60.

cert-manager creates a CertificateRequest → completes DNS-01 → gets new cert

It updates the Secret in-place (mydomain-wildcard-tls in the traefik namespace)

Traefik watches Secrets and hot-reloads the cert automatically — no restart needed
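The "2/3 of the validity period" arithmetic from that answer is easy to check. A minimal sketch, assuming the default behaviour described above (renewal one third of the lifetime before expiry) and a made-up issue date:

```python
from datetime import datetime


def renewal_time(not_before: datetime, not_after: datetime) -> datetime:
    """Renewal moment for a cert that is renewed once two thirds of its
    lifetime has elapsed, i.e. one third of the lifetime before notAfter."""
    lifetime = not_after - not_before
    return not_after - lifetime / 3


# Hypothetical 90-day certificate issued on 1 Jan 2025
issued = datetime(2025, 1, 1)
expires = datetime(2025, 4, 1)  # 90 days later
renew_at = renewal_time(issued, expires)
print(renew_at, (renew_at - issued).days)
```

For a 90-day certificate this lands on day 60, which matches the figure the LLM gave.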

First catastrophe: Flannel VXLAN Failure after reboot

Just as I was trying to relax after all the config so far, I decided to simulate a power cycle, so I switched off the power supply and turned it back on. For some reason, all pods on all nodes except Node2 were stuck in the ContainerCreating state. The error looked like this:

Failed to create pod sandbox: plugin type="flannel" failed (add):

failed to load flannel 'subnet.env' file:

open /run/flannel/subnet.env: no such file or directory

Long story short: the K3s server on Node1 took a long time to come back while, as the AI put it, “Reconciling bootstrap data between datastore and disk”. Meanwhile, the K3s agents had already gone past the Flannel init phase, couldn’t get a subnet allocation, and stayed permanently stuck. (I am highly skeptical of this explanation, but I’ll research it later.)

I rebooted the cluster and this happened again. So, the LLM was confidently lying. Since it’s a small cluster, and I expected this to repeat (I retested over several reboots; it happens every now and then), I asked the LLM to write subnet.env as part of the startup script. That worked flawlessly, but I must get back to this again.
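For reference, the file Flannel complains about is just a handful of KEY=VALUE lines. Below is a minimal sketch of rendering one for a node. It assumes K3s's default cluster CIDR of 10.42.0.0/16, a /24 pod subnet per node, and the usual VXLAN MTU of 1450; the node subnet is hypothetical, and this is not the startup script the LLM actually produced.

```python
from ipaddress import ip_network


def render_subnet_env(cluster_cidr: str, node_pod_cidr: str, mtu: int = 1450) -> str:
    """Render the contents of /run/flannel/subnet.env for a single node."""
    cluster = ip_network(cluster_cidr)
    node = ip_network(node_pod_cidr)
    if not node.subnet_of(cluster):
        raise ValueError(f"{node} is not inside the cluster CIDR {cluster}")
    # Flannel records the first address of the node subnet, with its prefix length.
    gateway = node.network_address + 1
    lines = [
        f"FLANNEL_NETWORK={cluster}",
        f"FLANNEL_SUBNET={gateway}/{node.prefixlen}",
        f"FLANNEL_MTU={mtu}",
        "FLANNEL_IPMASQ=true",
    ]
    return "\n".join(lines) + "\n"


# Hypothetical node that was allocated 10.42.1.0/24
print(render_subnet_env("10.42.0.0/16", "10.42.1.0/24"), end="")
```

Hardcoding this only treats the symptom: the subnet has to match the pod CIDR the node was actually assigned, so it remains a workaround until the real cause is understood.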

Weird 404 issue with Grafana, ArgoCD, and Longhorn

Soon after SSL config was completed, things were looking great from DevOps perspective. But all the services were getting empty content in their UI. Looking into XHR requests, they were all getting 404. The reason was the websecure entrypoint in Traefik had this rule:

    routes:
      - kind: Rule
        match: PathPrefix(`/dashboard`) || PathPrefix(`/api`)
        services:
          - kind: TraefikService

Notice the missing Host condition. Unfortunately, other services were sending requests to /api too, and getting a 404. This is where I became skeptical about the things the AI was doing. I will cover it in another post, but it was spewing so much almost-accurate code that I was losing my grip on reviewing every line, and things like this happen.
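The effect of the missing Host condition can be shown with a toy model of rule matching. This is a deliberately simplified sketch, not Traefik's real matcher or its priority logic, and the hostnames are hypothetical.

```python
from dataclasses import dataclass
from typing import Optional


@dataclass
class Route:
    name: str
    path_prefixes: tuple[str, ...]
    host: Optional[str] = None  # None models a rule with no Host(...) matcher

    def matches(self, host: str, path: str) -> bool:
        # With no Host condition, the rule applies to every hostname.
        host_ok = self.host is None or self.host == host
        return host_ok and any(path.startswith(p) for p in self.path_prefixes)


# The rule as it was: path prefixes only
leaky = Route("dashboard", ("/dashboard", "/api"))
# The same rule scoped to one (hypothetical) hostname
fixed = Route("dashboard", ("/dashboard", "/api"), host="traefik.example.com")

# A Grafana XHR request, using a made-up hostname and path
request = ("grafana.example.com", "/api/dashboards/home")
print(leaky.matches(*request))  # True: the dashboard route swallows the request
print(fixed.matches(*request))  # False: the request can reach Grafana's own route
```

Once a route like this claims a request, it is forwarded to the dashboard's service, which has nothing at that path, hence the 404s.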

Distributed Object Storage: Garage

Installation went smooth, no AI needed. But I had the replication factor (RF) set to 3. I have only two nodes with mass storage, so I changed it to 2, and Garage stopped.

Error: Previous cluster layout has replication factor 3, which is different than the one

in config file (2). The previous cluster layout can be purged, if you know

what you are doing, simply by deleting the `cluster_layout` file in your metadata directory.

Easy peasy: ssh-rm-rf’d those files, deleted the pods, went to get a coffee. Murphy’s law: the pods still had the old config. Enter LLM. It said:

After updating the ConfigMap (RF=3→2), existing pods kept running with the old config. The init container already ran and generated the config from the old template — the live-updated ConfigMap volume mount doesn't re-trigger the init container.

Fix: Manual rollout restart

Reflector: Automatic TLS Secret Distribution

For some reason, Garage was still not using TLS. But I had configured Traefik with one. It turned out that the cert-tls secret must be copied to the service’s namespace that wants to use TLS. It was manual, and I would have to update every time it changes… every 60 days. I posed this question to the AI, and it turned out Reflector can do this for me and it will resync every time it gets updated.

Cluster Alerting with ntfy

I love PagerDuty, but I wanted to see if I could set up something self-hosted. The AI suggested ntfy; I deployed and configured it. Well, I'm pretty happy with it.

Setting up Cloudflare Tunnel

So far, everything works in my LAN. What if I wanted to expose some services to the outside world? A tunnel would do, but is there a free one in the market? Seems like Cloudflare does provide that service. And, I finally exposed a couple of my services to the shark infested internet. : )

So, that concluded my 9 hours of getting K3s working on my 4-node cluster with the help of an LLM. I am pretty sure it would have taken at least one frustrating week to get everything right without AI; plain old Google search is just too dumb, but AI is overconfident and makes mistakes. I’ll probably write about the mental overload that it takes to review AI-generated code, and the fatigue that leads to missing “correct looking bad code” due to the sheer volume of it.

I will be doing disaster recovery and system hardening maybe tomorrow or later. I'll hopefully be able to write a post about it too.

Epilogue

The way I see LLMs assisting coding is the same way I felt when Google became really good at finding the right articles to assist coding. A lot of people who swore by reading complete reference books for every technology they wanted to lay their hands on were skeptical of the knowledge of these Google-search-and-paste engineers, but eventually that became the de facto way of coding. A few things stood out from this week-long experiment:

At this point, it's comfortably clear that AI assisted coding is here to stay.

You don't need many engineers to do your project. Very likely, you don't even need multiple teams for coding, deployment, reliability, and design -- all you need is one person each, or maybe fewer.

Outsourcing is going to be cheap.

A full-stack engineer can now do the job of a very capable full team without slowing down.

There will be vibe-coding disasters at scale, and that would not be surprising.

SaaS companies will shrink in number -- both the number of companies that exist and the number of employees in those companies. This is because SaaS is one-size-fits-all, and clients end up paying for too many undesired features, or end up changing their process to align with the generic SaaS. Now, they can just hire ONE ENGINEER and get their tiny, highly specialized service coded and hey! they don't even need to store their data on third-party servers.

Hiring will shrink.